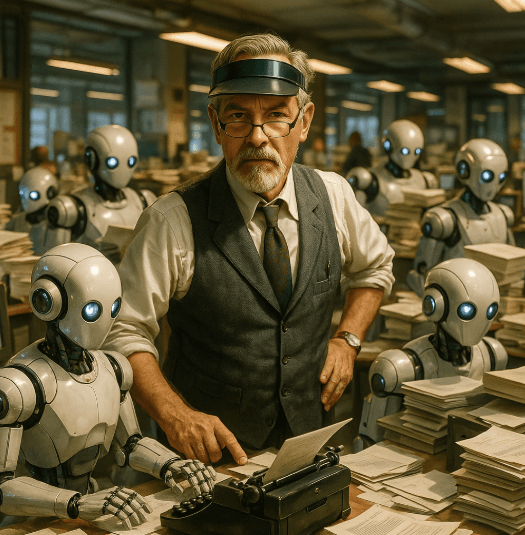

BoSacks Speaks Out: The Uncopyrightable Future and the Feedback Loop Nobody Wants to See

By Bob Sacks

Fri, Feb 27, 2026

I’m going to begin with a question I don’t have a definitive answer to, despite having spent a lot of time researching it. In my experience, those are the questions that actually matter.

Here it is.

If AI-generated content cannot be copyrighted, and it cannot, and publishers are firing human writers and replacing them with AI, and they are, what happens when the next generation of AI systems trains on that content?

And what happens to the generation after that?

I don’t think anyone has thought this through. Not seriously. Not all the way to the end. So let me give it a Bo-try.

We have to start with copyright, because everything else rests on it. And on this point, there is no ambiguity. The U.S. Copyright Office has been crystal clear. Copyright protects works of human authorship. If a piece of content is generated entirely by a machine, without meaningful human creative contribution, it is not eligible for copyright protection. Period.

This isn’t a gray area. It isn’t a policy proposal. It isn’t waiting for Congress. The Office has already rejected registrations for fully AI-generated works. Federal courts have upheld that position. The legal foundation goes all the way back to an 1884 Supreme Court decision that tied copyright to human creativity. That anchor has never moved.

Which leads to a conclusion many publishers seem determined not to look at directly.

When a media outlet fires its writers and replaces them with AI, it isn’t just cutting payroll. It is voluntarily walking away from intellectual property protection. Everything it publishes as pure machine output, without meaningful human creative contribution, becomes, by default, legally ownerless. Anyone can copy it. Anyone can resell it. Anyone can scrape it and feed it into another AI system. The publisher has no standing to object. None.

This is not hypothetical. We’ve already seen the warning shots. Sports Illustrated made headlines in 2023 when it was caught publishing articles under fake bylines, complete with invented author bios and AI-generated profile photos. That was treated as a scandal.

What’s actually happening across the industry is quieter, and far more dangerous.

For every large outlet that gets caught, there are hundreds of smaller ones that have simply stopped hiring writers altogether. Editors no longer edit writing. They manage prompts. They route machine output. The human role has shifted from author to traffic cop. The content looks like journalism. It carries familiar brand names. But legally, it belongs to no one.

And now we get to the part that should be keeping people awake at night.

What happens when AI systems start training on AI-generated content at scale?

Researchers have a name for it. Model collapse. Others call it AI inbreeding. Different labels, same phenomenon. A recursive feedback loop in which the flaws, shortcuts, and hallucinations of one generation of AI output become the training data for the next generation, amplifying the damage each time around.

A study published in Nature described it bluntly. When models are trained on machine-generated data, output quality degrades. With each successive generation, the degradation accelerates. The lead researcher, an Oxford computer scientist, compared it to photographing a photograph, then printing it, then photographing it again. Eventually, the signal disappears into noise. You don’t get an image. You get a dark square.
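For readers who want to see the loop rather than take it on faith, here is a toy sketch. It is not the method used in the Nature study. It assumes a made-up 50-word vocabulary with a long-tailed distribution, and a "model" that is nothing more than the word frequencies of the last corpus it saw. The function name and every parameter are hypothetical, chosen only for illustration. Each generation publishes a corpus, and the next generation trains only on that corpus.

```python
import random
from collections import Counter


def simulate_collapse(vocab_size=50, samples_per_gen=200, generations=30, seed=0):
    """Toy feedback loop: each 'model' is trained only on the previous model's output.

    Returns the number of distinct words each successive model can still produce.
    All names and parameters here are illustrative assumptions.
    """
    rng = random.Random(seed)

    # Generation 0 stands in for human text: a long-tailed, Zipf-like distribution
    # in which a few words are common and many are rare.
    words = list(range(vocab_size))
    weights = [1.0 / (rank + 1) for rank in range(vocab_size)]

    support_sizes = []
    for _ in range(generations):
        # "Publish" a corpus by sampling from the current model.
        corpus = rng.choices(words, weights=weights, k=samples_per_gen)

        # "Train" the next model on that corpus alone: it only knows the words
        # it saw, in the proportions it saw them.
        counts = Counter(corpus)
        words = list(counts.keys())
        weights = [counts[w] for w in words]

        support_sizes.append(len(words))
    return support_sizes


if __name__ == "__main__":
    print(simulate_collapse())
```

The point of the sketch is structural. Once a rare word fails to appear in one generation's corpus, no later generation can ever produce it again, so the count of distinct words can only hold steady or fall. Real language models are vastly more complicated, but the one-way loss of the rare and the unusual is the same basic shape the researchers describe.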

Now look at the scale of the system we’re feeding into this loop.

By early 2025, nearly three-quarters of newly created web pages contained some AI-generated text. AI-written pages appearing in Google’s top search results nearly doubled in a single year. Automated “news” sites tracked by NewsGuard exploded from a few dozen to well over a thousand in roughly the same timeframe.

This matters because the web is the training ground. Future AI systems will not be trained on the internet as it once was. They will be trained on an internet increasingly composed of their own output. And because AI-generated content is uncopyrighted and legally ownerless, there is no friction, legal, economic, or moral, slowing its ingestion.

Which opens a legal void big enough to drive an industry through.

If AI-generated content has no copyright owner, no one can sue over its use. There is no infringement without a rights holder. So when an AI system scrapes oceans of machine-generated content, no one has standing to object. But when that same system produces degraded, misleading, or dangerous output as a result, who is responsible?

The publisher that generated the original content without copyright protection?

The AI company that trained on it?

The user who relied on the output?

As far as I can tell, current law doesn’t have a clean answer to any of those questions.

There is a final irony here, and it’s almost too dark to ignore.

By firing their writers, publishers aren’t just dismantling the human creative infrastructure that gave their brands value in the first place. They are actively poisoning the well from which the next generation of AI systems will drink. Epoch AI and related peer-reviewed work project that high-quality, publicly available human-generated text could be fully utilized or effectively exhausted between 2026 and 2032, with earlier exhaustion possible under aggressive training or overtraining scenarios.

The companies that understand this, the ones sitting on deep archives of human-written, copyrighted material, are suddenly holding something extraordinarily valuable. The New York Times understood that when it sued OpenAI. Whether the rest of the industry figures it out before the archive of human expression is buried under an avalanche of machine-generated content is an open question.

I started with a question I don’t know how to answer.

I still don’t know.

But I am increasingly convinced that the publishers celebrating their AI cost savings today are setting fire to the ground they’re standing on.

And the rest of us are standing there too.

As always, I could be wrong. I have been wrong once or twice before.

But I don’t think I am.