BOSACKS SPEAKS OUT: When AI Becomes Worth Paying For

By Bob Sacks

Thu, Apr 2, 2026

Let’s stop whispering and get this over with.

The publishing industry didn’t stumble into this moment. It engineered it. Carefully. Methodically. Over decades.

We trained readers to expect everything for free. We optimized headlines for clicks instead of substance. We replaced reporters with dashboards, investigations with listicles, and institutional memory with “content strategy.” Then we stood around blinking in disbelief when readers stopped paying.

So yes, AI showing up in our newsrooms isn’t some hostile takeover. It’s succession planning.

We deserve this.

This week, a study reported by Futurism claimed that opinion pieces at the New York Times, the Wall Street Journal, and the Washington Post were more than six times as likely to contain AI-generated material as staff-written news stories. Let that sink in. Not fringe blogs. Not zombie local sites. The marble-column institutions that still like to think of themselves as journalism’s moral spine.

The flashpoint was a Modern Love contributor at the Times who admitted to using ChatGPT, Claude, and Gemini as “collaborative editors.” Not to write, she insisted. To guide. To inspire. To polish. To correct.

You can decide how much comfort that distinction offers.

But the real problem isn’t whether a chatbot suggested a metaphor or smoothed a sentence. The real problem is that the industry is asking the wrong question.

Everyone is stuck on how AI is being used.

The only question that matters to me is what happens when it works.

What happens when AI can help produce journalism that people are willing to pay for?

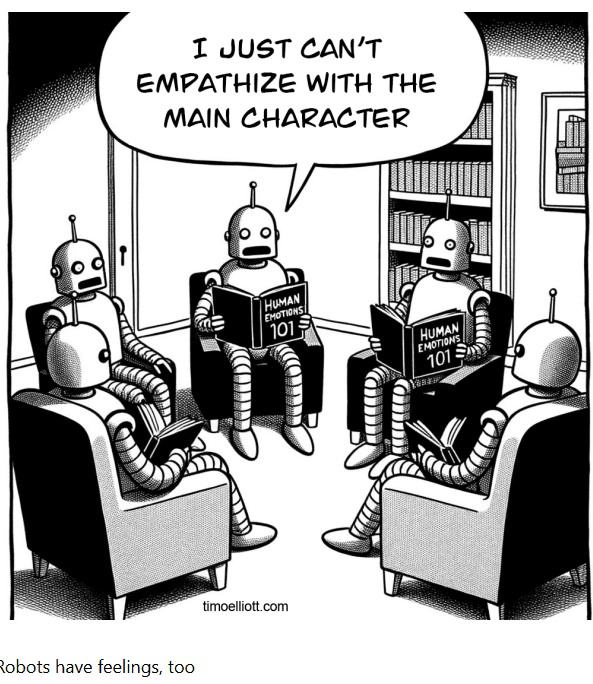

Because here is the part almost no one in publishing is willing to say out loud. I fully expect that AI will eventually write very well. Not just competently. Not just fluently. But with restraint, with empathy, and yes, with something that will look an awful lot like compassion.

Not because the machine feels anything. It doesn’t. But because compassion in writing has always been a discipline, not a miracle. It is attention. Proportion. Knowing when not to push, not to exploit, not to perform. Those qualities have been formalized for decades through stylebooks, editing layers, and newsroom norms. They are patterns. Machines learn patterns. It is naive to believe they will not learn these, too.

There is also a quiet historical precedent that makes today’s panic feel familiar. Newsrooms have been using primitive sentiment analysis for years, long before anyone called it AI. Obituary desks in particular developed rule-bound systems to flag tone, avoid unintended cruelty, and standardize language around death, illness, and loss. Editors learned that word choice could compound grief or soften it. Over time, those rules became guidelines. Guidelines became software checks. No one argued that obituaries lost their humanity because a system warned against the wrong adjective. They argued the opposite. That it prevented harm. What we are seeing now is not a break from history. It is the continuation of it, at scale.

When AI reaches that level, and it will, the industry loses its favorite defense. “It feels off” stops working. “I can tell” becomes wishful thinking. If the writing is accurate, fair, careful, and emotionally appropriate, then the objection is no longer aesthetic.

It becomes existential.

Because the outrage I keep hearing today is mostly about vibe. The prose feels hollow. Too smooth. Too bloodless.

Fine. Often true. For now.

But vibes are not a publishing strategy. And you cannot save an industry by insisting you can always spot the machine.

Consider the much-cited Ars Technica incident, where a reporter relied on a chatbot, published a hallucinated quote, and was fired after a public correction. That was not a story about AI. That was a story about negligence. About confusing convenience with process. About abandoning verification.

Journalists fabricated quotes long before silicon got involved. Stephen Glass didn’t need an algorithm to invent sources at The New Republic. He needed ambition and editors who trusted him too much.

The failure was oversight then. It is oversight now.

Meanwhile, entire communities across the country have lost their local newspapers. Not their “information ecosystem.” Their newspapers. No school board coverage. No zoning fights. No obituaries. No record of who stood up at the planning meeting and said something that mattered.

So when a study suggests that roughly nine percent of newly published articles may be partially or fully AI-generated, especially in smaller outlets, I don’t see a scandal.

I see triage.

The Associated Press has told staff that resistance is “futile.” The Washington Post runs AI-generated audio summaries. Bloomberg summarizes its own journalism with machines. The New York Times uses AI to test and write headlines.

This is not a debate about whether AI belongs in the newsroom. It already lives there.

The debate is whether publishers are going to be honest about it.

So far, they are not.

What we’ve gotten instead is managed ambiguity. “Tools.” “Assistance.” “Experimentation.” Language designed to delay the conversation that actually matters. Publishers are using AI while publicly expressing concern about AI, hoping the audience will not notice the contradiction.

That is not strategy. That is cowardice.

Readers, especially paying ones, are not stupid. They never were. They always figure it out.

So let me say what no communications department will.

AI-assisted journalism can be worth paying for.

Not because the machine is wise. It isn’t.

Not because it has judgment. It doesn’t.

Not because it feels compassion. It cannot.

It is worth paying for because it removes low-value labor so human beings can do high-value work.

Use AI to write the earnings brief.

Use it to summarize public records.

Use it to draft the weather box, the event calendar, the routine game recap.

Then take the human being who was doing those things and point them at the story that takes six months and makes someone powerful deeply uncomfortable.

That is the bargain.

That is how AI becomes worth paying for.

Every major technological shift in publishing followed this same arc. The tools improved. The panic flared. The excuses collapsed. And the real burden shifted upward to judgment.

The damage never came from technology.

It came from leaders who used technology to cut ambition instead of sharpen it.

AI is going to get very good. Better than most people expect and sooner than most editors are prepared to admit. When it does, machines will be able to write with care, restraint, and something that looks convincingly like compassion. That will not diminish journalism. It will clarify it. It will force news organizations to remember that their real value was never sentences alone, but judgment, courage, and the willingness to decide what truly matters. Used honestly, AI can give journalists back time, depth, and ambition. It can help rebuild coverage where nothing exists now and strengthen reporting where it barely survives. The publishers who understand this will not fear a future where machines write well. They will welcome it, because it allows humans to do what only humans ever could. Choose the stories. Protect the vulnerable. Confront power. And make journalism matter again.